DARPA Funded Study Using AI Developed at an Israeli University to Identify Violations of 'Social Norms'

DARPA funded a study that used an AI system created by Israeli researchers at Ben-Gurion University to identify "violations of social norms" in text. The AI reviewed a large body of text and ranked it across 10 categories that represent values like shame, guilt, pride, and embarrassment.

Ben-Gurion University has a history of partnerships with DARPA. In 2012, the Israeli team was the only foreign-led team competing in a DARPA robotics competition to receive a DARPA development stipend:

Prof. Yair Neuman helped develop a “novel” semantics-based model to assess, predict, and evaluate personality. Neuman has prior associations with the Homeland Security Institute and has written and co-authored several papers applying the Neuman-Cohen language model:

In 2012, Neuman wrote that automatic text analysis could be used to screen for depression:

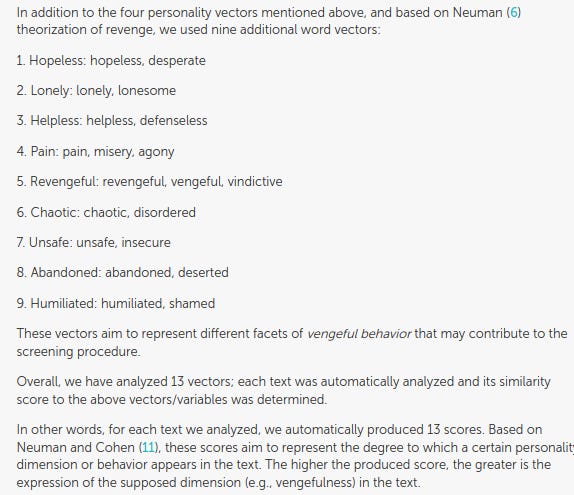

Neuman mentions in a 2014 paper that the vectorial semantics approach emerged in the context of profiling political leaders for military intelligence and has been used successfully in forensic psychiatry to “screen” for potential mass murderers:

“Following this logic, in this paper, we present a novel approach to personality assessment, which is based on the idea of vectorial semantics models (VSM) [11–12], explained in the next section. The idea of using a vectorial semantics approach to personality assessment emerged in the context of profiling political leaders for military intelligence, and was recently and successfully used in the context of forensic psychiatry for the screening of potential mass-murderers. This study represents the first time in which this approach is validated against the “big-five” personality factors and by using thousands of essays written by students.”

In a paper entitled “Profiling school shooters: automatic text-based analysis,” Neuman sought to use a vectorial semantics model to assess the personality of school shooters based on manifestos written by six of them. He envisioned a future in which mental health experts could flag students based on their social media posts:

-“The profiling of school shooters should be informative in the sense that it can be used for future screening of potential offenders. Such a screening procedure may identify candidates for (1) in-depth personal diagnosis and (2) preventive steps to be taken by mental health practitioners and law enforcement agencies.”

-“the fact that our methodology is automatic allows us to screen a massive number of texts in a short time. While ethical considerations are inevitable, we can definitely imagine a situation in which parents give the school permission to scan their teenagers’ social media pages under certain limitations.

In this context, using our automatic screening procedure, a qualified psychiatrist or psychologist, who will be trained to work with such a procedure, may automatically get red flag warnings for students whose texts express a high level of potential danger. While this methodology does not provide the magic bullet for identifying potential offenders, and should be cautiously used given the unknown percentage of false alarms, it clearly presents one pragmatic approach that can be further developed in order to gain better results for screening and prevention. In fact, the problem of “false positives” is an unresolved issue in screening for a small number of potential offenders. We do not pretend to solve this problem but introduce a methodology that like other methodologies addressing similar challenges, should be used with the highest degree of sensitivity in a reasonable context of decision making, prices, and alternatives.”
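The “false positives” problem the authors acknowledge can be illustrated with a short base-rate calculation. This is only a sketch: the prevalence, sensitivity, and specificity values below are hypothetical assumptions chosen for illustration (the paper itself describes the false-alarm rate as unknown), and the function name is likewise invented here.

```python
def positive_predictive_value(prevalence, sensitivity, specificity):
    """Share of flagged cases that are true positives, via Bayes' rule."""
    true_pos = prevalence * sensitivity
    false_pos = (1.0 - prevalence) * (1.0 - specificity)
    return true_pos / (true_pos + false_pos)

# Hypothetical inputs, NOT taken from the paper:
# 1 in 100,000 students is a genuine risk; the screen catches 99% of them
# and wrongly flags 1% of everyone else.
ppv = positive_predictive_value(prevalence=1e-5, sensitivity=0.99, specificity=0.99)
print(f"{ppv:.4%} of flagged students would be true positives")
```

Under these assumed numbers, fewer than one in a thousand flags would point to a genuine case, which is why screening for a very rare outcome produces mostly false alarms even when the classifier itself looks accurate.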

The study created subjective categories that overlap with emotions associated with mental health disorders:

DARPA Funding Using Israeli AI

“Identifying social norms and their violation is a challenge facing several projects in computational science. This paper presents a novel approach to identifying social norm violations. We used GPT-3, zero-shot classification, and automatic rule discovery to develop simple predictive models grounded in psychological knowledge. Tested on two massive datasets, the models present significant predictive performance and show that even complex social situations can be functionally analyzed through modern computational tools.”

“First, we have a limited purpose which is mainly methodological: To develop and test an AI-based methodology that can identify the violation of social norms rather than the norms themselves. … It is therefore hypothesized that certain social emotions may indicate a social norm violation and may be used for this paper’s main task, which is the classification of cases involving norm violation/confirmation.”

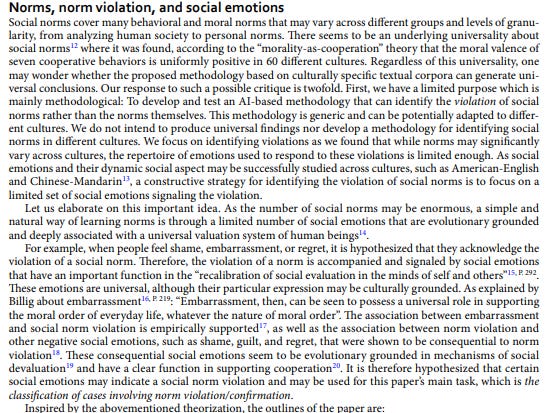

The 10 social norms the study created were:

Conclusion of the study:

“Social norms are either descriptive, representing the prevalence of a certain behavior (e.g., avoiding tax payment), or injunctive, representing the extent to which the behavior is approved by a relevant reference group. As social animals, human beings are specifically sensitive to the valuation of others and hence to injunctive norms and their violation, which is accompanied by social emotions such as guilt (e.g., 34). While social norms may be culturally specific and cover numerous informal “rules”, how people respond to norm violation through evolutionary-grounded social emotions may be much more general and provide us with cues for the automatic identification of norm violation.

In this paper, we have developed and tested several models for automatically identifying the violation of social norms. One would hardly find papers dealing with the automatic identification of social norm violations. Those focusing on norm violation usually concern norms of interaction in specific communities such as Reddit. This scarcity of research in identifying and recognizing social norm violations points to the fact that the automatic identification of social norm violations is an open challenge.

We have shown that through (1) the measurement of social emotions and social norms in textual data (2) zero-shot classification, (3) the use of the measured features in the automatic-rule discovery algorithm, and (4) the use of the discovered rules for generating simple features used in a Decision Tree model, may provide substantial improvement in the prediction of social norm violation. Moreover, using GPT-3, and domain expertise, we were able to identify top-level categories of social norms. Using the top-level categories of social norms, we were able to correctly identify the exact norm that has been violated. However, the number of social norms that we tested in the dataset was limited. The paper is, therefore another instance in which modern large language models, such as GPT-3, as combined with the domain expertise of a discipline (e.g., psychology), may advance research in psychology, the social sciences, and the humanities. Our paper presents some preliminary results but will be developed in future studies to include the identification of norm violation in conversation, the use of multi-modality for social norm violation, and the use of large language models to identify culturally specific norms.

Our study is limited to developing tools for identifying the violation of “general” social norms. However, the granularity level of norms may span from social groups to the individual level. In this context, it was argued that “people have preferences for following their ‘personal norms’ what they believe to be the right thing to do” and that personal norms may be a powerful explanatory idea in understanding human unselfish behavior. This idea may be tested using tools of computational personality analysis where relatively stable patterns of thought, emotion, and behavior (i.e., personality) may be extended to include personal norms and the tendency to follow them. For instance, the representation of others (i.e., beliefs about others), is considered to be a major dimension in understanding human personality and it has been measured through novel tools of AI for a better understanding of fictional characters. In fact, and in the context of personal norms, suggest that AI may be used “to better navigate the complex landscape of human morality and to better emulate human decision-making” even in contexts governed in the past by different methodologies such as the behavioral economics. One may hypothesize, for instance, that a person holding negative paranoid beliefs of others and following conspiracy theories may be less prone to express one-shot anonymous unselfishness when he considers his interlocutor to belong to out-group players. It is possible to measure the variability of norms using AI and language-based models tools. As explained by, “what matters is not just the monetary payoffs associated with actions, but also how these actions are described”. For example, automatically analyzing a massive amount of textual data following Hurricane Catarina could have tested whether cooperative actions are described differently by people with different moral norms.
It could have been hypothesized that those with more negative beliefs about other out-group members are more inclined to hold different norms. This hypothesis aligns with findings. It may suggest that those individuals may also describe cooperative actions in a less favorable language and may be more inclined to behave less cooperatively (e.g. fewer donations for charity outside their reference group). In addition, our paper is limited by the inevitable choice of datasets, theoretical approaches, and ML models. Therefore, the results presented here are preliminary and should be modestly limited to the specific context of this study.”
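
The pipeline summarized in the conclusion (measure social emotions in text, discover rules over those measurements, then classify with a simple model) can be sketched in a few lines. This is not the authors' implementation. No GPT-3 or zero-shot model is called; the emotion scores and labels are invented stand-ins for such a model's output, and a single-threshold rule search (a decision stump) stands in for both the paper's rule-discovery algorithm and its Decision Tree. All names are hypothetical.

```python
# Hypothetical zero-shot scores in [0, 1] for two social emotions per text,
# plus a label (1 = norm violation). These rows are invented for illustration.
samples = [
    ({"guilt": 0.9, "pride": 0.1}, 1),
    ({"guilt": 0.8, "pride": 0.3}, 1),
    ({"guilt": 0.7, "pride": 0.2}, 1),
    ({"guilt": 0.2, "pride": 0.8}, 0),
    ({"guilt": 0.1, "pride": 0.9}, 0),
    ({"guilt": 0.3, "pride": 0.6}, 0),
]

def discover_rule(data):
    """Exhaustively search rules of the form `scores[feature] >= threshold`
    and return (feature, threshold, accuracy) for the best training accuracy."""
    best = None
    for feature in data[0][0]:
        for threshold in sorted({scores[feature] for scores, _ in data}):
            hits = sum((scores[feature] >= threshold) == bool(label)
                       for scores, label in data)
            accuracy = hits / len(data)
            if best is None or accuracy > best[2]:
                best = (feature, threshold, accuracy)
    return best

def predict(rule, scores):
    """Apply the discovered rule: 1 = predicted norm violation, 0 = not."""
    feature, threshold, _ = rule
    return int(scores[feature] >= threshold)

rule = discover_rule(samples)
print(rule)
print(predict(rule, {"guilt": 0.85, "pride": 0.2}))
```

On this toy data the search settles on a rule over the guilt score, which mirrors the paper's hypothesis that social emotions can signal a norm violation. A rule that fits six invented rows perfectly says nothing about how such a rule would perform on real text.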